Collaborative Robotic Workbench

A team of ICD researchers and ITECH alumni were finalists in the Kuka Innovation Award 2018: Real World Interaction Challenge, which targeted applications for human-robot collaboration outside typical industrial environments.

The building industry, and in particular the wood construction sector, is an ideal scenario for human and robot collaboration. The building industry consumes more than 40% of resources and energy worldwide, and yet it is also slow to adopt digital and robotic technologies. As a result, the productivity of the construction sector has been stagnating for decades. Symptomatically, builders must execute complex tasks in unstructured environments without the aid of digital monitoring or robotic precision. Building and construction processes require an incredible amount of dexterity, process knowledge, and craftsmanship, making a purely automated workflow unrealistic. However, introducing a collaborative robot into a fabrication and construction process could enable entirely new production protocols and increased system complexity, while potentially also increasing both efficiency and quality control.

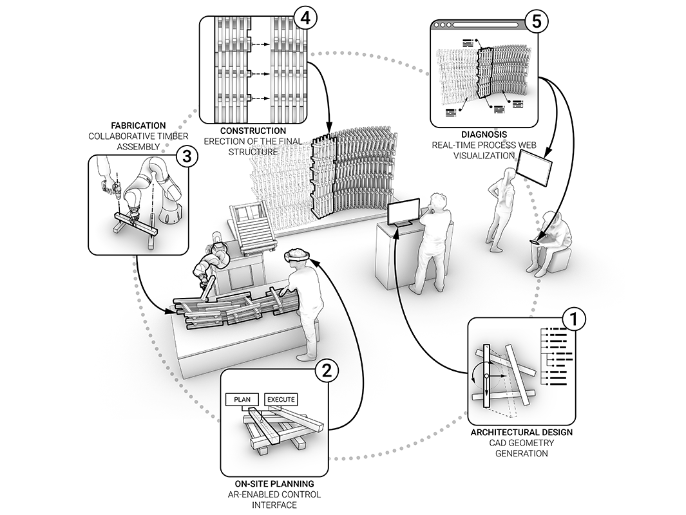

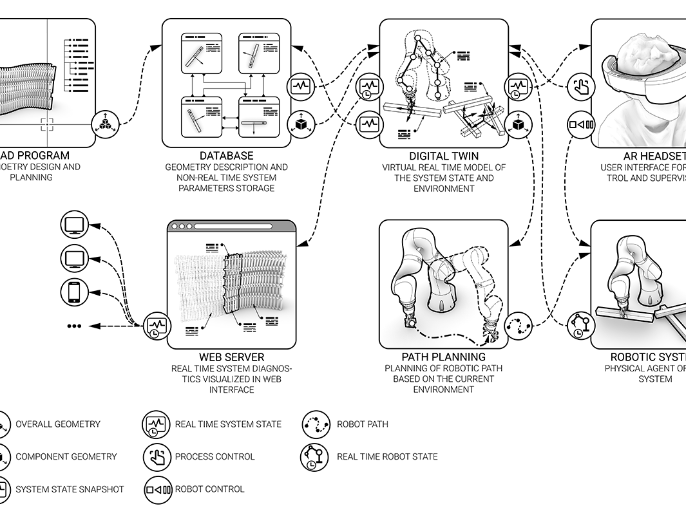

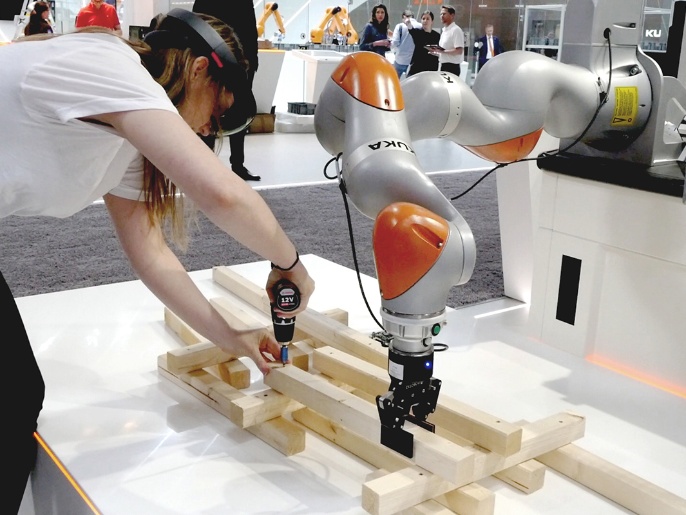

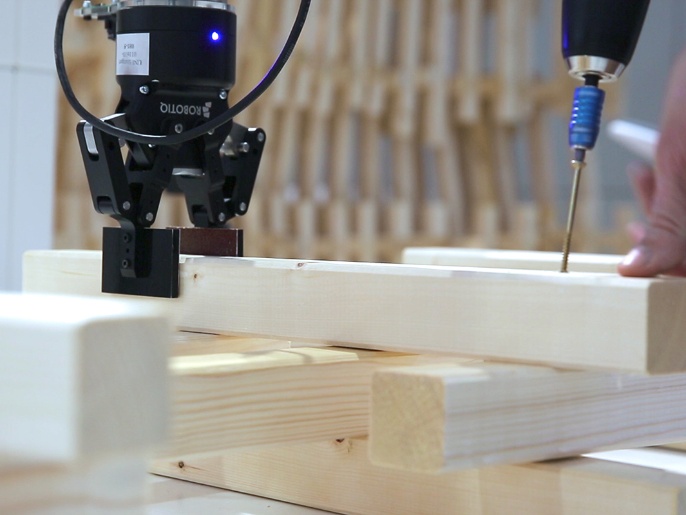

For the Kuka Innovation Award, the team developed a collaborative robotic workbench: a set of technologies that would enable and enhance human-robot collaboration for the building sector. The workbench sought to augment the capabilities of builders by giving them direct access to robotic control and superimposed digital information through an augmented reality interface, facilitating an interactive and collaborative robotic building process.

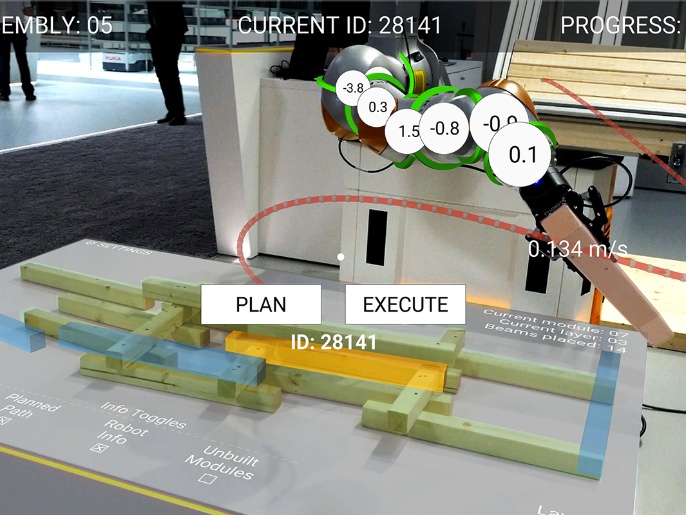

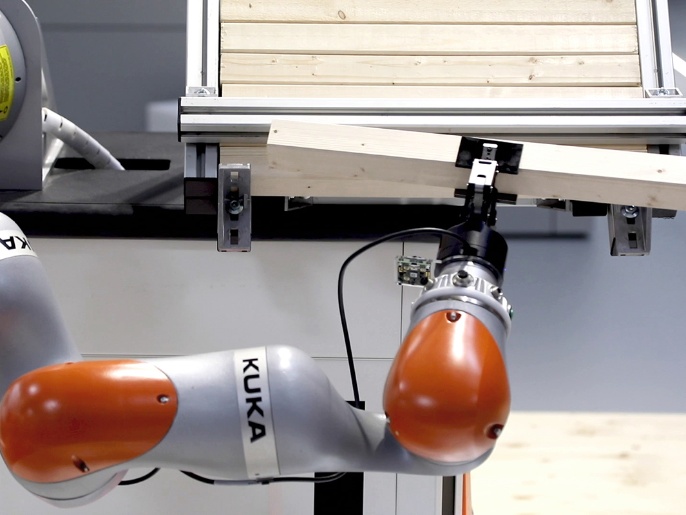

The workbench and a proof-of-concept building process were presented as a live demonstration and exhibit during the 2018 Hannover Messe. During this demonstration, construction tasks were exchanged iteratively between human and robot towards the assembly of a non-standard wooden architectural system. In this workflow, the robot executed precise tasks, such as placing each element, while a builder executed tasks that required dexterity and process knowledge. An augmented reality user interface served as a control layer through which this non-expert user or builder could directly manipulate and engage with the robotic process, making informed decisions based on tactile feedback, process knowledge, and superimposed data and statistics. In this interface, the structure being built could be previewed, the next parts to be fabricated could be selected, and robotic path planning could be done at runtime. Process data and feedback, including robotic axis torques, normally only accessible to experts or system programmers, could be superimposed over this view to enhance intuition and support the diagnosis of system errors. A backend computational engine dispatched system instructions between the various system components, while a custom online platform displayed the current build status for remote monitoring.
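The iterative exchange of tasks described above can be illustrated with a minimal Python sketch. It is not the team's backend engine: the names `Agent`, `Task`, `assign`, `build_sequence`, and `build_status`, the `needs_dexterity` flag, and the "fasten element" step are all hypothetical, chosen only to show how precise tasks might be routed to the robot and dexterous ones to the builder, and how a simple build status could be reported.

```python
from dataclasses import dataclass
from enum import Enum


class Agent(Enum):
    ROBOT = "robot"
    BUILDER = "builder"


@dataclass(frozen=True)
class Task:
    element_id: int
    name: str
    needs_dexterity: bool  # hypothetical flag, not taken from the project


def assign(task: Task) -> Agent:
    """Route dexterous tasks to the builder and precise tasks to the robot."""
    return Agent.BUILDER if task.needs_dexterity else Agent.ROBOT


def build_sequence(element_ids):
    """Alternate a robot placement and a builder step for every element."""
    steps = []
    for element_id in element_ids:
        tasks = (
            Task(element_id, "place element", needs_dexterity=False),
            # "fasten element" is an assumed example of a dexterous task.
            Task(element_id, "fasten element", needs_dexterity=True),
        )
        for task in tasks:
            steps.append((assign(task), task))
    return steps


def build_status(steps, completed):
    """Summarize progress, e.g. for a remote monitoring view."""
    next_agent = steps[completed][0].value if completed < len(steps) else None
    return {"completed": completed, "total": len(steps), "next_agent": next_agent}


if __name__ == "__main__":
    sequence = build_sequence([1, 2, 3])
    for agent, task in sequence:
        print(f"{agent.value}: {task.name} (element {task.element_id})")
    print(build_status(sequence, completed=2))
```

In a real system the assignment would depend on far richer information than a single flag, and the builder's selections in the augmented reality interface would change the sequence at runtime.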

Ultimately, the exhibition made the case that the building sector is a highly relevant application scenario for human-robot collaborative processes. While building processes have been developed from a human logic of assembly and dexterity, the introduction of robotic precision can open up many possibilities, particularly as a vehicle that connects a digital or computational design process directly to production and construction. In addition, the work suggested the vast potential of augmented reality and user interfaces as enabling technologies that can make complex robotic processes increasingly accessible.

PROJECT TEAM

Institute for Computational Design, University of Stuttgart (Prof. Achim Menges)

Bahar Al Bahar

Ondrej Kyjanek

Lauren Vasey

Benedikt Wannemacher

ACKNOWLEDGEMENTS

With the support of Rebecca Duque and Samuel Leder

FUNDING

Autodesk Forge

Kuka Roboter GmbH